Designing a CLI for AI agents

How we designed the Arcjet CLI in Go as a stable, defensive interface for humans and AI agents: predictable commands, machine-readable output, strict validation, and confirmation before production changes.

How we replaced a single devcontainer with isolated OrbStack VMs to run multiple parallel development environments for AI agent workflows — architecture, CLI, and tradeoffs.

For the last few years we’ve been using a local devcontainer for Arcjet’s development environment. This setup provided benefits including: consistent tooling versions across the team, a production-close replica with all services running locally, and a security boundary that keeps supply chain risks isolated from the developer's host machine.

The problem is that devcontainers are fundamentally single-instance. One container, one code checkout, one set of application services, one set of hardcoded ports. That worked fine when development was serial. It stopped working when we started running AI coding agents alongside manual development - two agents on different features, a developer running the dashboard for manual testing, all at the same time.

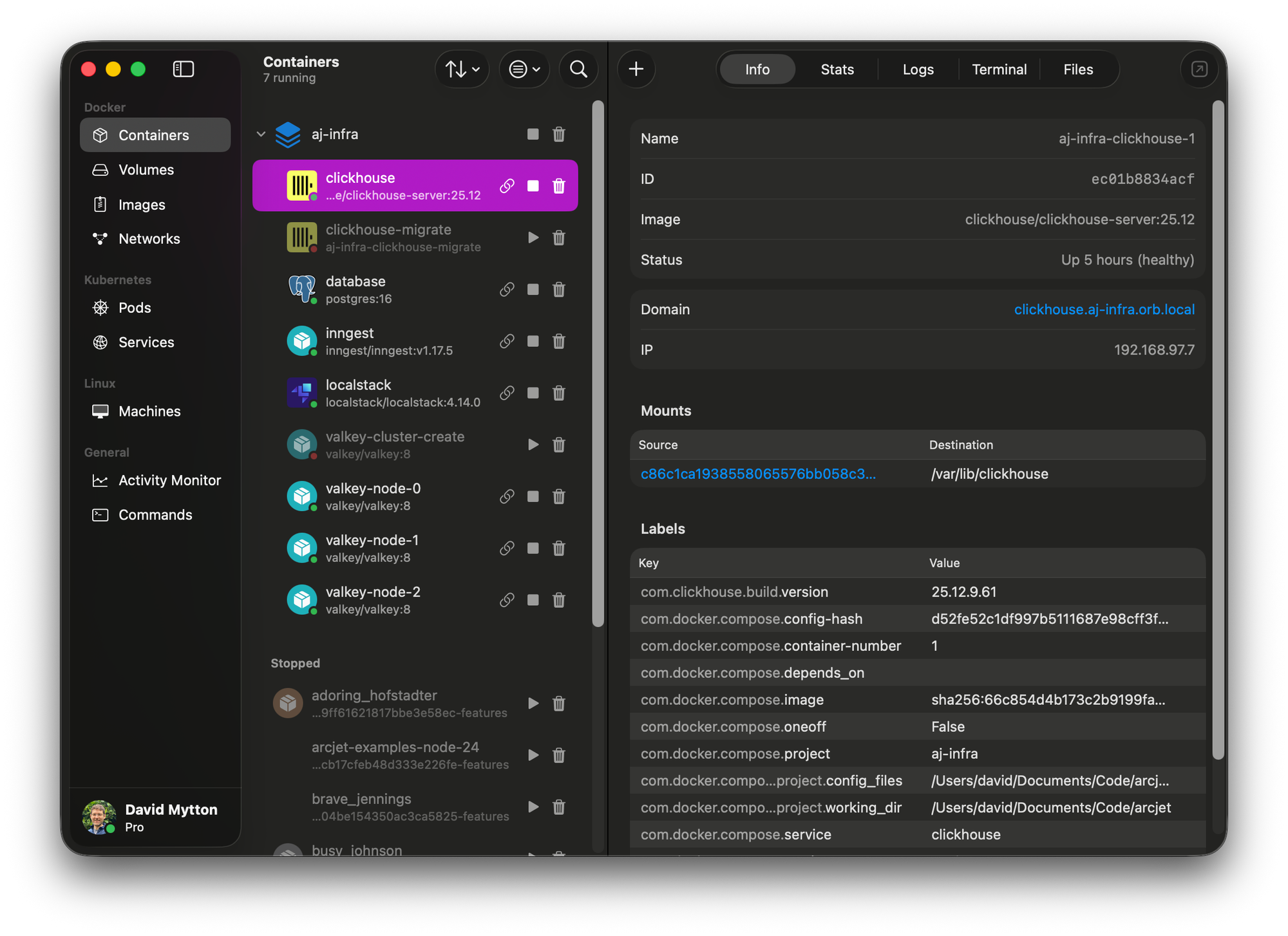

We still share infrastructure (Postgres, ClickHouse, Valkey) across all workspaces - that's a deliberate tradeoff to keep resource usage manageable on a developer laptop. The problem is application services: ports collide, Docker Compose domain names clash, and there's only one working tree to modify. Rebuilding the devcontainer to switch context took up to 20 minutes.

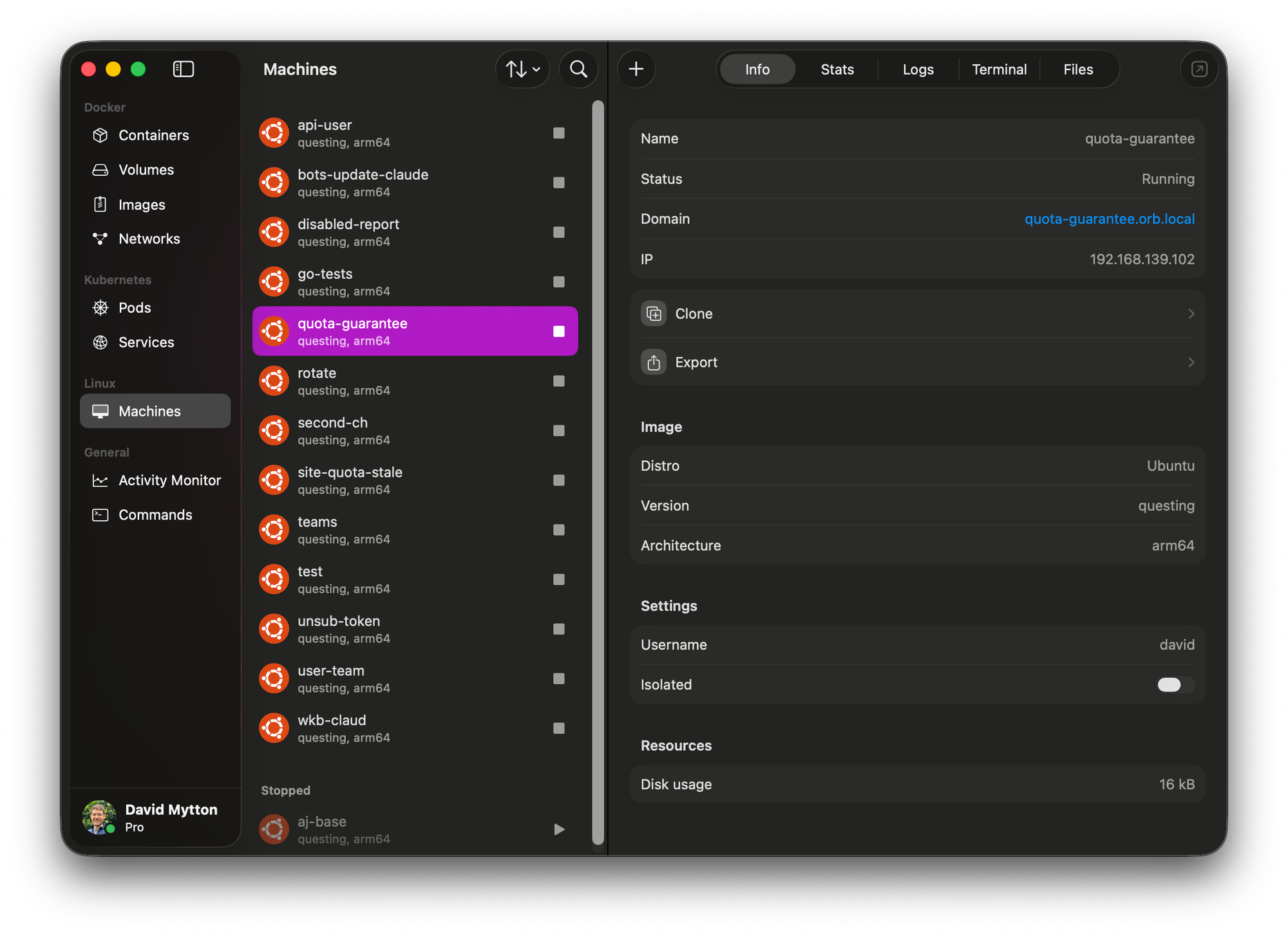

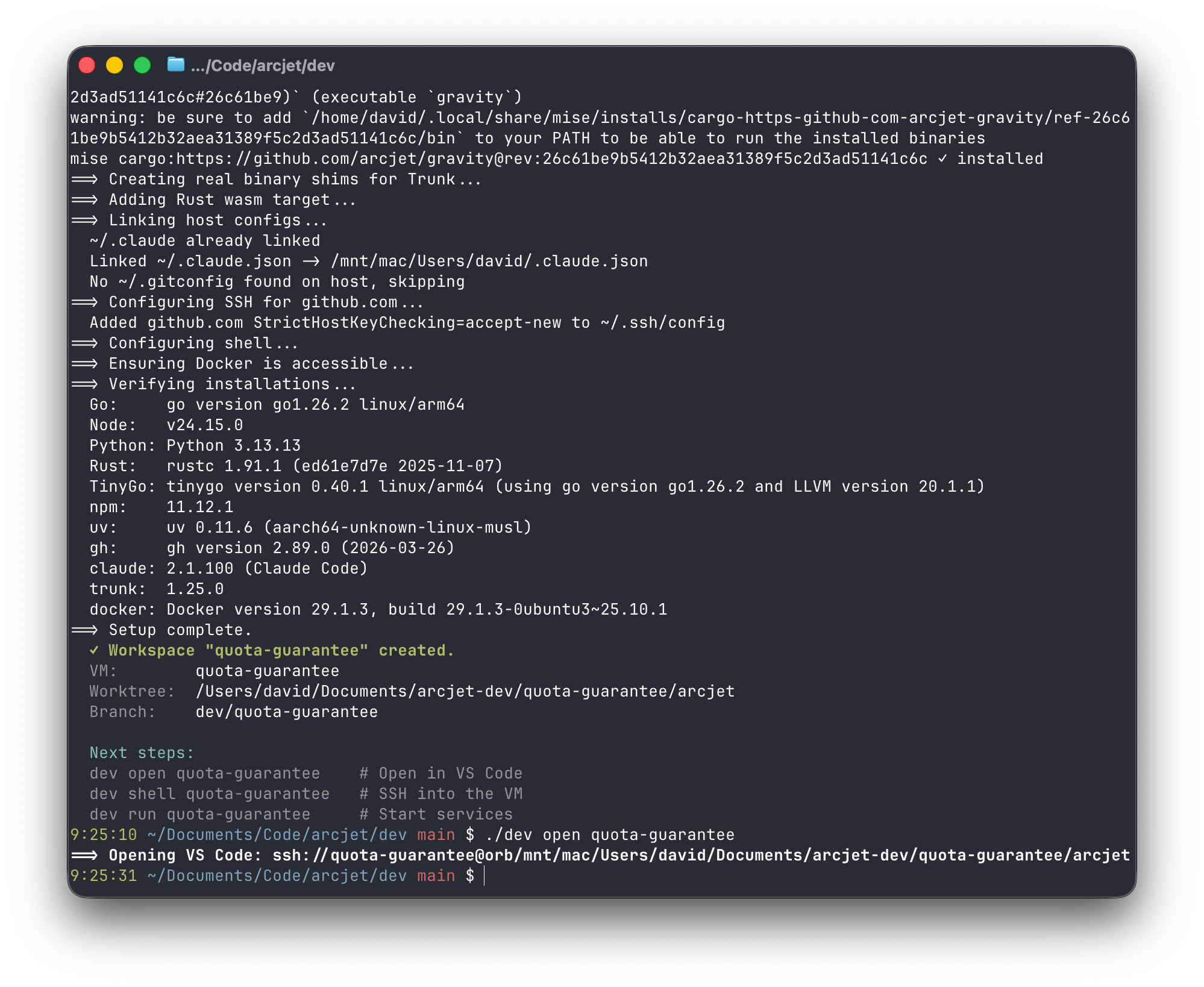

The new setup replaces the single devcontainer with isolated OrbStack VMs - one per workspace - each with its own git worktree and its own set of namespaced application services, all connecting to a shared infrastructure stack. New workspaces clone from a pre-built base VM rather than provisioning from scratch, bringing creation time down to around 5-10 seconds.

This post covers how the system is built, the key design decisions, and what we learned along the way.

The new system has three layers:

A base template VM (aj-base) is created once via dev base create. It provides a full toolchain - Go, Node, Python, Rust, TinyGo, Claude Code, the GitHub CLI - using mise for declarative version management, then shuts down as a clean snapshot. New workspaces clone from this base instead of provisioning from scratch, which is what gives us the biggest time savings.

A shared infrastructure stack (Postgres, ClickHouse, Valkey, LocalStack, Inngest) runs once and serves every workspace.

Each workspace gets its own VM, a git worktree at ~/Documents/arcjet-dev/<name>/arcjet and its own Docker Compose project. Services are namespaced per workspace, e.g. https://decide.agent-1.orb.local or https://api.agent-1.orb.local, so multiple workspaces can run their full backend stack simultaneously without port conflicts or domain collisions. It also means we can use OrbStack’s automatic HTTPS feature to replicate production.

The result is the following:

macOS (OrbStack)

├── Base template VM (aj-base)

├── Shared infra (postgres, clickhouse, valkey, localstack, inngest)

├── Workspace "agent-1" → VM + worktree + namespaced services

└── Workspace "agent-2" → VM + worktree + namespaced services

The tricky part is running multiple Docker Compose stacks without conflicts. We solve this with a workspace override file that does three things: disables the infra services (they're already running in the shared project), strips all host port bindings, and applies OrbStack domain labels to namespace each service under the workspace name. The workspace override uses Docker Compose v2.24's !reset YAML tag to clear port bindings inherited from the base config rather than trying to override them one by one.

OrbStack handles the HTTPS termination and DNS resolution for *.orb.local domains automatically - no /etc/hosts hacking or nginx config needed. This does lock us in to OrbStack, but we’ve been using it successfully for three years without issues and it solves a lot of pain.

Git worktrees give each workspace an isolated checkout on its own branch. When you destroy a workspace, the branch is kept by default with a prompt to delete it - a deliberate choice to avoid accidentally losing agent work. We may consider switching to git clone --reference in the future if we see problems with shared .git state across workspaces, but so far this hasn’t been an issue.

The workspace lifecycle is managed by a Go CLI (dev) that wraps the OrbStack and Docker Compose primitives into something usable:

./dev create agent-1 # clone base VM + create worktree

./dev run agent-1 all # start backend services

./dev open agent-1 # VS Code Remote SSH

./dev destroy agent-1 # tear down

The prototype was ~670 lines of bash. I rewrote it in Go for better error handling, testability, and consistency with the rest of the backend codebase.

dev manages the state explicitly so it can detect when two workspaces would conflict. The dashboard service (app) has a hard constraint: WorkOS auth callbacks are registered to app.arcjet.orb.local, so only one workspace can run it at a time. Rather than letting Docker Compose silently fail or produce confusing errors, the CLI checks for this conflict before starting services and prompts before taking it over from another workspace.

dev run <name> all starts the full backend. You can also start specific services by name - useful when an agent only needs a subset of the stack and you want to keep resource usage down.

dev open launches VS Code connected to the VM via Remote SSH. This is the full IDE experience with the repo already checked out on the right branch. dev shell is an escape hatch when you need direct terminal access instead, dropping you into a full SSH session inside the VM. Both run ssh -A so your host SSH agent is forwarded automatically. If the SSH config points IdentityAgent at 1Password (~/.1password/agent.sock) then signed commits from the VM authenticate via 1Password biometrics with no key material on disk.

One friction point with ephemeral VMs is authentication. Re-authenticating Claude Code and the GitHub CLI in every new workspace would be a significant overhead, especially when workspaces are created and destroyed frequently.

We solve this with symlinks. A setup.sh script links ~/.claude and ~/.claude.json inside the VM to their equivalents on the macOS host via OrbStack's /mnt/Mac filesystem mount. Claude Code's OAuth tokens, settings, MCP config, and project history are live-shared between host and VM through the symlink. Fresh VMs skip the first-run flow entirely.

For the GitHub CLI, we read a fine-grained, sandboxed GitHub token from 1Password, pass it through an SSH pipe, and run gh auth login --with-token inside the VM. The token never touches the host filesystem: it exists in process memory, in the encrypted SSH stream, and finally in the VM's own config. The host user's personal gh token, which has broader permissions, is intentionally excluded.
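The token hand-off can be sketched as two processes joined by a pipe. This is an assumption-laden outline rather than our actual code: the helper names are hypothetical, the secret reference `op://vault/item/token` is a placeholder, and `agent-1@orb` assumes OrbStack's `machine@orb` SSH addressing.

```go
package main

import (
	"fmt"
	"os/exec"
)

// ghLoginPipeline wires `op read` into `gh auth login --with-token`
// running inside the VM over SSH. Nothing is executed here.
func ghLoginPipeline(secretRef, sshHost string) (*exec.Cmd, *exec.Cmd, error) {
	read := exec.Command("op", "read", secretRef)
	login := exec.Command("ssh", sshHost, "gh", "auth", "login", "--with-token")
	// Connect the reader's stdout to the SSH session's stdin. The token
	// travels through the pipe and is never written to a host file.
	pipe, err := read.StdoutPipe()
	if err != nil {
		return nil, nil, err
	}
	login.Stdin = pipe
	return read, login, nil
}

// runPipeline starts the reader, lets the SSH command consume its output,
// then collects the reader's exit status.
func runPipeline(read, login *exec.Cmd) error {
	if err := read.Start(); err != nil {
		return fmt.Errorf("start op: %w", err)
	}
	if err := login.Run(); err != nil {
		_ = read.Wait() // reap the reader even when the login fails
		return fmt.Errorf("gh auth login: %w", err)
	}
	return read.Wait()
}

func main() {
	read, login, err := ghLoginPipeline("op://vault/item/token", "agent-1@orb")
	if err != nil {
		fmt.Println("pipeline setup failed:", err)
		return
	}
	// Print the planned commands instead of running them.
	fmt.Println(read.Args)
	fmt.Println(login.Args)
}
```

Passing the token on stdin rather than as an argument also keeps it out of the process list on both sides.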

This is worth covering because Arcjet is a security product, and we should hold our own infrastructure to a high standard.

VM isolation is meaningfully stronger than containers. A compromised agent in one workspace can't access another workspace's VM. But the `/mnt/Mac` mount gives VMs read/write access to the macOS host filesystem - the same exposure model as the devcontainer's bind mount. That's a known tradeoff for developer experience.

The curl | sh install for mise runs inside an isolated VM, not on the host. That limits the blast radius compared to running the same install directly on macOS.

One caveat worth noting: macOS's Transparency, Consent, and Control (TCC) framework, which controls application access to sensitive directories like ~/Documents, treats OrbStack as the trusted process, not code running inside the VM. Malware executing inside a VM that accesses host-mounted files via `/mnt/Mac` won't trigger a separate TCC consent prompt because the I/O is proxied through the trusted runtime. This is the same exposure model as devcontainers. VM isolation is stronger against lateral movement between workspaces, but it doesn't change the host filesystem access story.

We cover our full laptop security stack - outbound firewall, TCC, 1Password SSH agent - in a separate post.

The obvious first idea: run multiple instances of the existing setup with different COMPOSE_PROJECT_NAME values. The problem is that devcontainers are designed as single-instance environments. VS Code's devcontainer extension doesn't support multiple simultaneous instances of the same config without workarounds, and docker-in-docker inside each devcontainer means nested virtualization - container inside VM inside macOS - adding latency and complexity we didn't want. Port conflict resolution would need dynamic port allocation propagated through environment variables, which the devcontainer spec doesn't support natively. We'd have been fighting the tool rather than using it.

I seriously considered cloud dev environments like GitHub Codespaces and Coder - isolated workspaces without local resource constraints sound appealing in principle. In practice, our monorepo requires large instance sizes to be usable, and Codespaces specifically has slow startup times that would replace one latency problem with another. We may revisit a self-hosted Coder setup as we scale agent usage further, but it's not the right fit right now - the local-first approach gives us the iteration speed we need, especially since our dev team already has powerful Apple M-series laptops.

Nix flakes or devenv would give reproducible toolchains without VMs, and the toolchain isolation problem is genuinely well solved by Nix. But service isolation - running multiple backend stacks simultaneously - would still require additional tooling, and there's no equivalent to OrbStack's automatic HTTPS domain routing. Nix also has a steep learning curve that would slow down onboarding. Solving half the problem with high adoption friction didn't seem like the right trade.

Local Kubernetes (minikube, kind, Tilt) was also an option - each workspace as a Kubernetes namespace is a clean model on paper. Although we run k8s in production, that setup is AWS-specific and I didn’t want to spend a lot of time converting the Docker Compose setup to k8s just for local dev.

The OrbStack VM approach won because it solved both halves of the problem - toolchain isolation and service isolation - without requiring us to change the Docker Compose setup we already had working.

The base VM pattern is the highest-leverage optimization. Without it, every dev create would run a full toolchain install and container build, which is slow. With the base VM, workspace creation is a VM clone plus a git worktree checkout.

The other meaningful startup optimization is a pre-seeded go-cache volume in the base VM. First-time service startup compiles all Go services from scratch, which takes 60-90 seconds. With the cache warm, it's ~10 seconds.

Per-workspace databases would be cleaner in principle but the resource costs - Postgres, ClickHouse, and Valkey together - are non-trivial, and running four or five copies on a laptop would be worse than the shared-state tradeoff.

The move from bash to Go pays dividends immediately in a CLI that other people need to use. Error messages are readable, behavior is predictable, and the test suite catches regressions. Bash is fine for one-off scripts, but it's the wrong tool for something with a dozen commands, stateful workspace tracking, and shell injection concerns. The full implementation is in the main codebase, with its own go.mod and tests.

The devcontainer served us well. The assumptions it was built on - serial development, one active context, a single developer rather than a human working alongside multiple AI agents - just don't hold anymore. The new setup is more complex, but the complexity is in the right place: in a tested CLI and a documented architecture, not in a 20-minute rebuild loop every time an agent needs a fresh branch.
